Running OpenAI’s GPT-2 Language Model on your PC


Tim Hanewich

February 18 · 4 min read

What is GPT?

GPT stands for Generative Pre-trained Transformer, a family of language processing models that use deep learning techniques to generate natural language text. To date, OpenAI has worked on several major versions:

- GPT-1: Introduced in 2018, this was the first GPT model, with 117 million parameters.

- GPT-2: Released in 2019, this model had 1.5 billion parameters and was known for its ability to generate high-quality, coherent text.

- GPT-3: Launched in 2020, this is the most powerful GPT model yet, with 175 billion parameters. It can perform a wide range of natural language tasks, including language translation, question-answering, and text completion.

ChatGPT is an implementation of the GPT-3 model, fine-tuned to embody an assistant personality and to be generally helpful by doing things like answering questions.

GPT-2

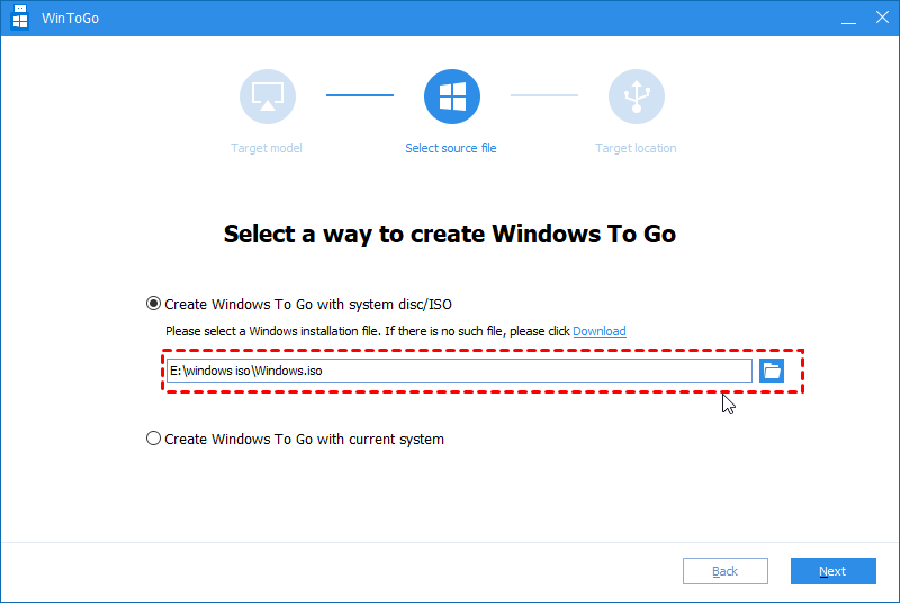

Before ChatGPT, GPT-2 was OpenAI's first model that stunned the world with its ability to generate high-quality text. Its story about unicorns was what first caught the attention of millions. While it isn't nearly as powerful as ChatGPT, with only 0.8% of the compute power, GPT-2 is open-source. Getting the outdated code (and libraries) to run today can be challenging, though. Here is a step-by-step guide on how I did it:

1: Install Python

I tried multiple versions of Python, but the only version I found that works with the needed packages is version 3.7.0. Download Python 3.7.0 from the official Python website.
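Before going further, it can help to confirm which interpreter you are actually running. The snippet below is a minimal sketch, not part of the GPT-2 repo: the function name `is_supported_python` is made up for illustration, and it only checks the major and minor version. Treating every 3.7.x release as equivalent to 3.7.0 is an assumption I have not tested.

```python
import sys

# The article reports success with Python 3.7.0. Accepting any 3.7.x
# release here is an untested assumption.
REQUIRED = (3, 7)


def is_supported_python(version_info=sys.version_info):
    """Return True when the major.minor version matches REQUIRED."""
    return tuple(version_info[:2]) == REQUIRED


if __name__ == "__main__":
    found = ".".join(str(part) for part in sys.version_info[:3])
    if is_supported_python():
        print(f"Python {found}: matches the version used in this guide")
    else:
        print(f"Python {found}: this guide was only tested with 3.7.0")
```

Run it with the same `python` command you plan to use for the rest of the steps, so that you check the right installation.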

2: Download the GPT-2 Repo

Use git to clone the GPT-2 code from GitHub:

git clone https://github.com/openai/gpt-2

2: Create a virtual environment

Create your virtual environment:

python -m venv rungpt2

Activate your virtual environment (I'm using Command Prompt in Windows here):

rungpt2\Scripts\activate

3: Install Packages

First, let's install TensorFlow. The only version of TensorFlow I found to work with GPT-2 is version 1.13.1.

python -m pip install tensorflow==1.13.1

Install fire version 0.1.3:

python -m pip install fire==0.1.3

Install requests version 2.21.0:

python -m pip install requests==2.21.0

Install tqdm version 4.31.1:

python -m pip install tqdm==4.31.1

4: Downgrade Protobuf

There are some compatibility issues that arise with the version of Protobuf that is downloaded with TensorFlow version 1.13.1. Downgrade Protobuf to version 3.20.0 with the following command:

python -m pip install protobuf==3.20.0

5: Install regex

Install the latest version of regex. I used version 2022.10.31 in my specific case.

python -m pip install regex
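If you want to reproduce this setup later, the version pins from the last three steps can be collected into a requirements file. The following is a sketch under a few assumptions: the file name `requirements-gpt2.txt` and the helper names are made up, regex is pinned to the version I happened to get (2022.10.31) instead of "latest", and I have not verified that installing everything in a single pip run behaves the same as the step-by-step installs above.

```python
# Versions taken from the install steps in this guide. The regex pin is an
# assumption: the guide installs the latest release, which was 2022.10.31
# when the author ran it.
PINNED = {
    "tensorflow": "1.13.1",
    "fire": "0.1.3",
    "requests": "2.21.0",
    "tqdm": "4.31.1",
    "protobuf": "3.20.0",
    "regex": "2022.10.31",
}


def requirements_text(pins):
    """Render the pins as lines in pip's 'name==version' format."""
    return "\n".join(f"{name}=={version}" for name, version in pins.items()) + "\n"


def write_requirements(path="requirements-gpt2.txt", pins=PINNED):
    """Write the pins to a file (hypothetical name) and return its path."""
    with open(path, "w", encoding="utf-8") as handle:
        handle.write(requirements_text(pins))
    return path


if __name__ == "__main__":
    print(requirements_text(PINNED), end="")
```

After calling `write_requirements()`, the file could be installed inside the activated virtual environment with `python -m pip install -r requirements-gpt2.txt`.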

6: Download models

With all of the dependencies installed in our virtual environment, we are now ready to download the model we would like to use. OpenAI released several different models that are compatible with GPT-2, each varying in size and computational power. The options available are:

- 117M: This is the smallest version of the model, with 117 million parameters.

- 345M: This is the medium-sized version of the model, with 345 million parameters.

- 774M: This is the large version of the model, with 774 million parameters.

- 1558M: This is the largest version of the model, with 1.5 billion parameters.

While the bigger models produce higher-quality output, they also take significantly longer to run. Once you have your model picked out, run the following script from the gpt-2 repo to download it (from inside the gpt-2 directory):

python download_model.py 774M

You'll wait some time as the script downloads the model from Microsoft Azure to the models directory in your local gpt-2 repo.
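A large download can fail partway through, so a quick check that every file arrived may save some confusion later. This is a rough sketch, not something shipped with the repo: the function name is made up, and the file list is what `download_model.py` fetched when I looked at it, so treat it as an assumption and compare it against your copy of the script.

```python
from pathlib import Path

# File names as listed in the repo's download_model.py at the time of
# writing. This list is an assumption; check it against your checkout.
EXPECTED_FILES = [
    "checkpoint",
    "encoder.json",
    "hparams.json",
    "model.ckpt.data-00000-of-00001",
    "model.ckpt.index",
    "model.ckpt.meta",
    "vocab.bpe",
]


def missing_model_files(model_name, models_dir="models"):
    """Return the expected files that are absent from models_dir/model_name."""
    model_path = Path(models_dir) / model_name
    return [name for name in EXPECTED_FILES if not (model_path / name).is_file()]


if __name__ == "__main__":
    missing = missing_model_files("774M")
    if missing:
        print(f"Missing files: {missing}")
    else:
        print("All expected model files are present")
```

Run it from inside the gpt-2 directory and replace "774M" with the model you downloaded. If anything is reported missing, re-running the download script is the simplest fix.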

7: Run it!

You're now ready to run GPT-2! From a command line, inside the root directory of your gpt-2 repo, run the following Python script for an interactive experience with GPT-2:

python src/interactive_conditional_samples.py --model_name 774M --top_k 40 --length 256

Obviously, replace "774M" with the name of the model that you chose to download in step six. The length parameter controls how many "tokens" the model will be limited to when answering. I won't go into detail on the rest of the parameters, but you'll find a description of each in the interactive_conditional_samples.py script.
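If you switch between models or lengths often, the command from this step can be assembled in Python instead of retyped. This is only a sketch: `build_command` is a made-up helper, and it covers just the three flags used in this article (`--model_name`, `--top_k`, `--length`).

```python
import sys


def build_command(model_name="774M", top_k=40, length=256):
    """Build the argument list for the interactive sampling script.

    sys.executable is used so the script runs with the same interpreter
    (and therefore the same virtual environment) as this helper.
    """
    return [
        sys.executable,
        "src/interactive_conditional_samples.py",
        "--model_name", str(model_name),
        "--top_k", str(top_k),
        "--length", str(length),
    ]


if __name__ == "__main__":
    # Print the command for inspection; it is not executed here.
    print(" ".join(build_command()))
```

To actually launch the script, the list could be passed to `subprocess.run(build_command("117M", length=64), check=True)` from the root of the gpt-2 repo.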

8: Custom Training (optional)

GPT-2 also allows you to train it on a corpus of text or other data. I will not go through that in this article, but you can search the web and find tutorials for doing so.

9: Prompting GPT-2

You cannot prompt GPT-2 like you would prompt ChatGPT. GPT-2 is strictly a completion engine. You enter the beginning of a sentence, thought, or paragraph, and it will complete it for you. See the examples I provide below.

Examples

Discovery in Antarctica

Why is football more popular than baseball

Each human is unique because